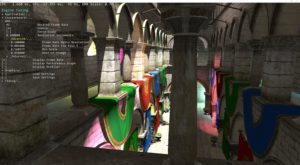

Recently I was having trouble reproducing a bug the perf team was running into; it had to do with a specific camera position in our workload. I decided the simplest and quickest approach (for me and for them) was to have them copy the camera data to the clipboard and email it to me, so that I could reproduce the position exactly.

The MSDN example code I found for copying data to the clipboard worked fine, but it was more elaborate than the simple case I needed. I’ve boiled it down to just a few lines, and I wanted to post it here in case anyone else wants to add this simple functionality to their application:

if ( ! OpenClipboard(hWnd) )

return;

EmptyClipboard();

char text[512] = "Your clipboard text (or data)";

size_t text_len = strlen(text);

// Allocate a global memory object for the text.

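HGLOBAL hglbCopy = GlobalAlloc(GMEM_MOVEABLE, (text_len + 1) * sizeof(char));

// If the allocation failed, release the clipboard before bailing out.

if ( hglbCopy == NULL ) { CloseClipboard(); return; }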

// Lock the handle and copy the text to the buffer.

char *lptstrCopy = (char *) GlobalLock( hglbCopy );

memcpy(lptstrCopy, text, text_len * sizeof(char) );

lptstrCopy[text_len] = (char) 0;

GlobalUnlock(hglbCopy);

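// Place the handle on the clipboard. On success the system owns the memory, so free it only on failure.

if ( SetClipboardData(CF_TEXT, hglbCopy) == NULL ) GlobalFree(hglbCopy);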

CloseClipboard( );
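
For the camera use case mentioned at the top, the text placed on the clipboard has to survive a trip through email and be parsed back on the other side. Here is a minimal sketch of how that could look. The `Camera` struct, its fields, and the `CameraToText` / `CameraFromText` helpers are hypothetical names made up for illustration, not the layout our workload actually uses, and the sketch assumes the default "C" locale for number formatting.

```cpp
#include <cstddef>
#include <cstdio>

// Hypothetical camera state; the real fields depend on your application.
struct Camera {
    float pos[3];
    float yaw;
    float pitch;
};

// Writes the camera as one line of text.
// Returns true only if the whole line fit in the buffer.
bool CameraToText(const Camera &cam, char *out, std::size_t out_size)
{
    // %.9g prints enough significant digits to round-trip a 32-bit float.
    int n = std::snprintf(out, out_size, "cam %.9g %.9g %.9g %.9g %.9g",
                          cam.pos[0], cam.pos[1], cam.pos[2],
                          cam.yaw, cam.pitch);
    return n > 0 && static_cast<std::size_t>(n) < out_size;
}

// Parses a line produced by CameraToText.
// Returns true only if all five values were read.
bool CameraFromText(const char *text, Camera *cam)
{
    return std::sscanf(text, "cam %f %f %f %f %f",
                       &cam->pos[0], &cam->pos[1], &cam->pos[2],
                       &cam->yaw, &cam->pitch) == 5;
}
```

With something like this, the `text` buffer in the clipboard snippet can be filled by `CameraToText(cam, text, sizeof(text))` instead of a string literal, and whoever receives the email can paste the line into whatever input path ends up calling `CameraFromText`.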